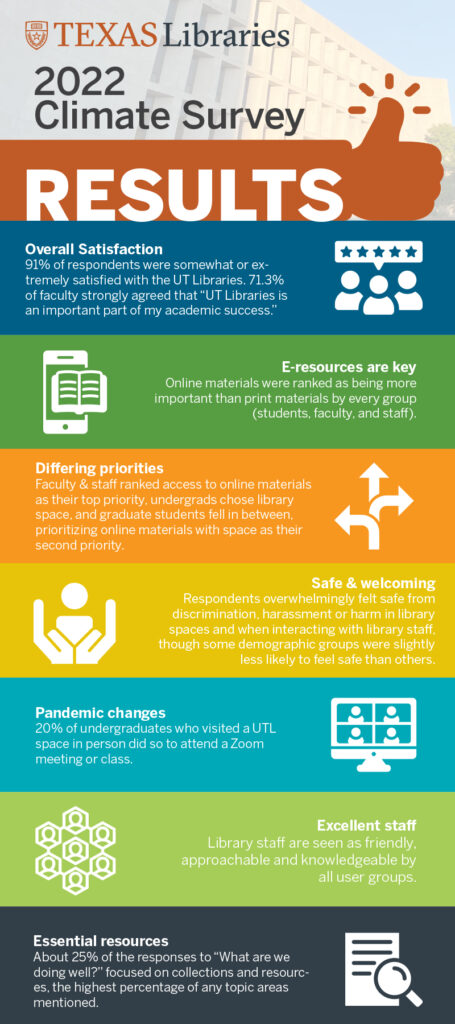

Library work involves simultaneously preserving the past, meeting needs in the present and trying to predict the future. Since there is no crystal ball, we rely on tools like surveys to help us monitor needs and make predictions. As previously noted, the Libraries conducted a campus-wide survey of students, faculty and staff in spring 2022. The results have now been analyzed and largely reaffirm that we are serving campus needs while pointing us toward areas where we can do even better.

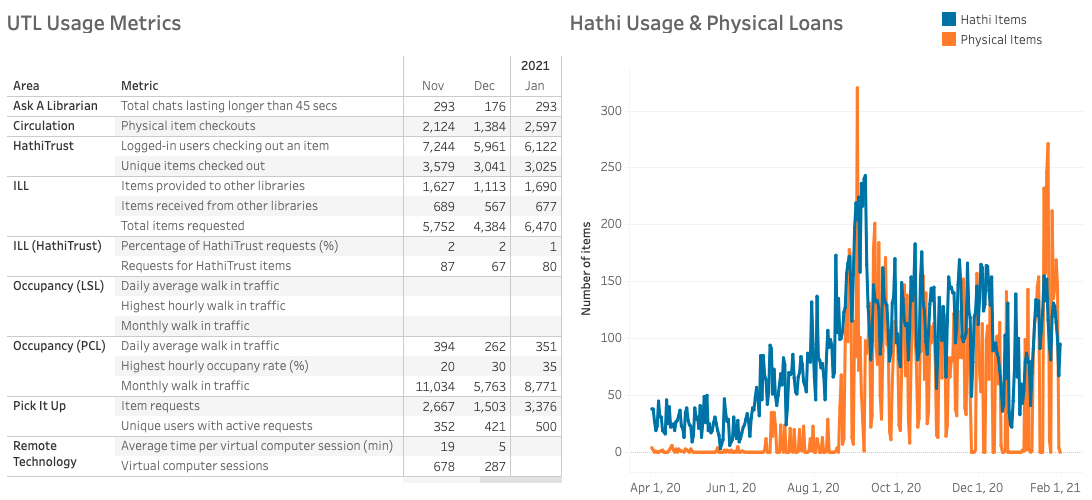

Following trends from the past decade, faculty and staff rated access to online materials as their top library priority and undergraduates ranked library spaces as most important. Grad students have continued to prioritize online materials throughout the past decade, while library space has emerged as a secondary priority over the past 5 years. Throughout the past 10+ years, students and faculty have continued to display a shift in preferences toward digital materials. This shift, however, is not complete. While ranking online materials as being more important than physical materials, 48.5% of respondents still reported that they prefer print materials to electronic resources. This is despite the fact that 96% of faculty reported that online materials are “very important,” compared to only 50% who rated physical materials as “very important.” These somewhat contradictory results, combined with usage statistics, paint a complex picture in which users value physical materials, but are more and more likely to use digital materials to fulfill their information needs.

“I use UT Libraries all the time to access materials for my work, it is invaluable. I could not do my work without UT Libraries, including the on-campus collections and materials available through Interlibrary loan.” -College of Liberal Arts Graduate Student

Happily, since collections are of high importance to every user group, users are largely satisfied with our library collections. In fact, about a quarter of the responses to an open-ended “What are we doing well?” question focused on collections and resources, the highest percentage of any topic area mentioned. In addition, 87% of respondents agreed that “UT Libraries gives me access to the resources I need to achieve my academic goals.” A student from the College of Natural Sciences stated, “As a graduate student I am constantly searching for articles on my research topic. With so few journals being open access, I literally could not do my work without UT Libraries!” The Get a Scan service and Interlibrary Loan are also highly appreciated, ranking in the top three priorities for faculty, staff, and graduate students.

“They provided me with the space and kindhearted welcomes to come into the library and study for a huge test.” -Moody School of Communications Undergraduate Student

We were pleased to see that 87% of respondents agreed that they “feel safe from discrimination, harassment or harm in library spaces and when interacting with library staff.” This continues a trend of decreasing worries about safety following concerns seen in the 2012 campus survey. An undergraduate business student noted, “During finals season I needed a safe space to study when I could[n't] in my dorm room. The only place open closest to me was the PCL which was perfect that night.” Additionally, 86% of respondents agreed that UT Libraries is a welcoming place. Demographic breakdowns, however, show us that those who identify as Black or African American, or nonbinary in gender identity, were slightly less likely than the overall group to agree that they feel safe. With the Libraries’ focus on IDEA (Inclusion, Diversity, Equity and Accessibility), including a recently adopted IDEA Action Plan, we hope to close that gap so that everyone feels safe and welcome in library spaces.

“My classes that I take make access to technology a must. The libraries on campus provide me with access to tools I would never have the opportunity to own. Tools such as 3D printers, book scanners, Adobe software, and much more. Without the library I would either had to have dropped out of college and be thousands of dollars in debt.” -College of Liberal Arts Undergraduate Student

Results also showed how much library users value the expertise, kindness, and labor of library staff. Library staff are seen as friendly, approachable, and knowledgeable by all user groups. In fact, staff comprised the second largest category of “What are we doing well?” responses. “In my UGS, a librarian came to explain to us how to use the library website and how to find the sources we would need to conduct academic research,” shared an undergraduate from the College of Communication. “It was extremely helpful and I have since used these tips and skills in all of my classes!” Faculty especially value staff expertise: research support from a librarian was ranked as the third most important service by faculty respondents. Library web pages (research guides) for a subject area or course, which are designed and curated by librarians, were ranked within the top five priorities by every user group.

“Our librarian helped us research resources to unpack racial bias in grading and writing and coordinated with us and the writing center and writing flag staff to hold faculty meetings around this topic. The resources our librarian found were immensely helpful as were their contributions to our conversations.” -School of Social Work Faculty Member

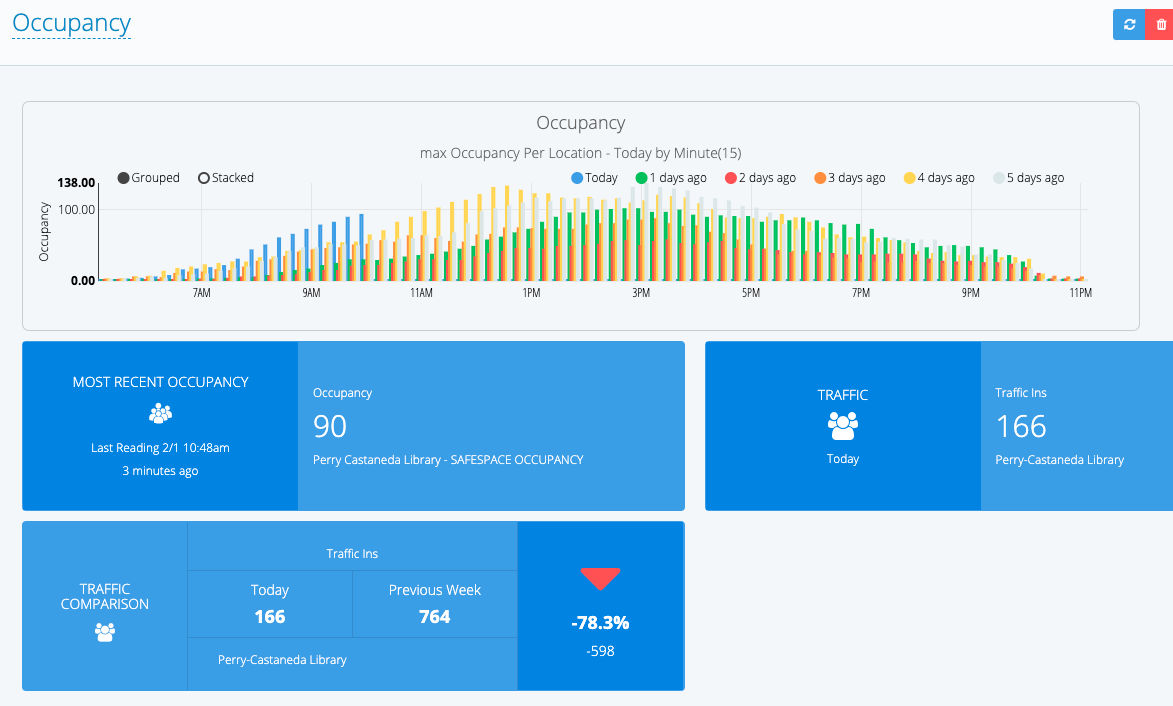

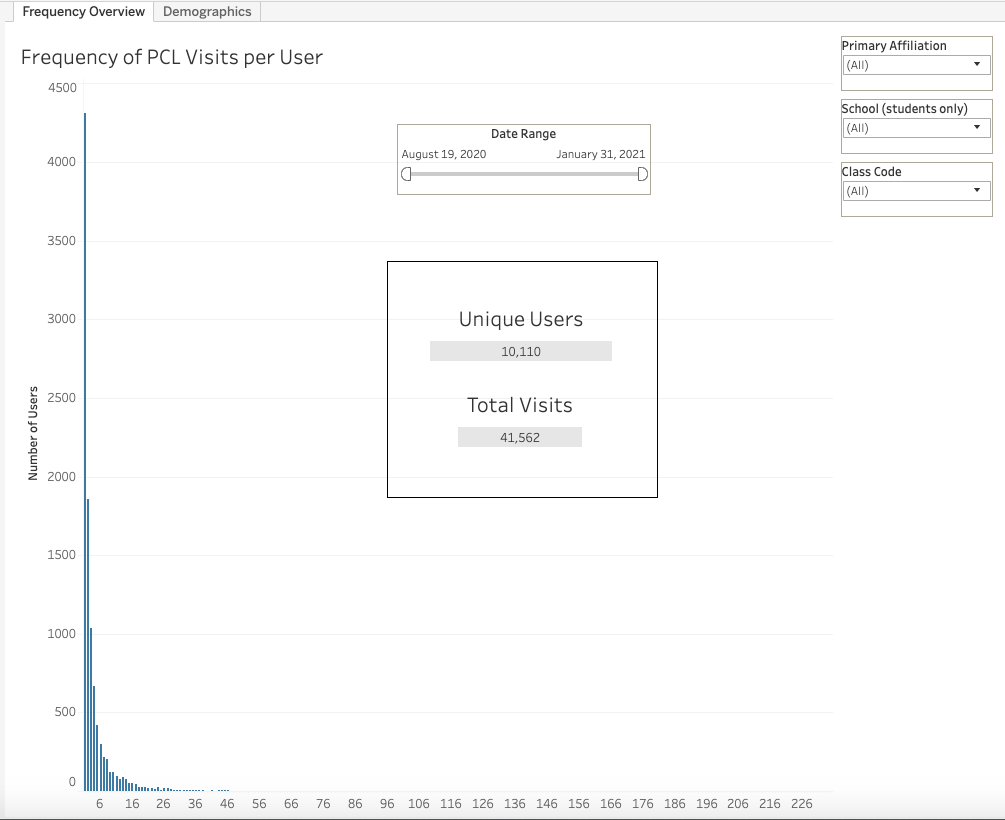

While it’s nice to receive confirmation of what we’re doing well, it’s also important to look for ways we can better serve the campus community. In an open-answer “What can we do better?” question, navigation and wayfinding were often mentioned as areas that the Libraries can improve. One example of a task that some users currently find difficult is the process of finding a book on our website and then locating it in the stacks. Under the guidance of our UX Designer, we’re working on a project to improve signage on the entry level of PCL and will eventually move toward improving navigation and wayfinding at large. We also noted that the increase in remote learning and work brought on by the pandemic may have introduced a new need, as 20% of undergraduates who reported visiting a UTL space did so in order to attend a Zoom meeting or class. Individual space for attending online meetings has come up consistently as a new need, both anecdotally and in more formal assessments.

“Essential in a chronic sort of way. No single event represents it.” -College of Natural Sciences Faculty Member

While survey results are useful for confirming suspicions, tracking trends, and uncovering areas of interest, they also raise further questions. Respondents frequently expressed a lack of awareness of the Libraries’ communication channels, and undergraduate responses showed that there is room for improvement in outreach. We will follow up on these findings with further research to untangle where the disconnects are and what we can do to better reach all users. The results will continue to provide rich fodder for ongoing planning, and rather than being satisfied with the positive findings, the Libraries will keep working to improve so that everyone on campus can succeed. An undergraduate student from the College of Liberal Arts summed up why we do what we do, stating that “UT Libraries are essential to the accomplishment of my academic work.”